Good engineering is mostly invisible. When it's working, operations teams move faster, contracts stay clean, and the machinery of servicing hums along without anyone noticing. That's the standard Point's engineering teams hold themselves to — not just shipping features, but building the kind of infrastructure that makes everything downstream better.

That philosophy comes to life in the details — the systems that reduce friction and quietly improve outcomes across the business.

Here are two projects that show how Point engineers think in practice:

Medei Kitagaki walks through how we applied AI in our Title Extraction project to automate one of the most complex, high-stakes steps in our underwriting workflow. The result moves beyond hype: missed findings fell from 22–26% of cases to 4–8%.

Ryan Minniear introduces Contract Sentinel, a layered quality engine that continuously monitors contracts for issues, routes them to the right teams, and resolves them cleanly.

These aren't flashy consumer features. They're the kind of pragmatic engineering that makes Point better at what it does — helping homeowners close sooner, delivering cleaner deals for our capital partners, and driving operational efficiencies that reduce costs.

Automating title extraction with LLMs at scale

In 2025, we delivered a major leap forward in underwriting efficiency with the launch of Title Extraction, a production-grade system that automates one of the most complex, error-prone steps in real estate servicing: identifying title findings from unstructured title reports.

The problem: high-variance, high-stakes data

Title reports enumerate liens and encumbrances on a property — information that must be precisely captured to ensure a clear title for customers and to meet the risk requirements of our capital partners. Historically, this work required highly skilled Underwriters to manually review PDFs, extract findings, and enter structured data across a multi-stage workflow with numerous handoffs and verification steps. Accuracy is non-negotiable, but the process introduced significant latency and limited our ability to scale.

Why we chose LLMs

The technical challenge was formidable. Title reports vary wildly in structure and language across more than 3,200 U.S. counties, making traditional parsers or fine-tuned ML models impractical to build and maintain. Instead, we leaned into large language models (LLMs) and their ability to reason over unstructured natural language. The goal was not to “replace” expert judgment, but to automate the deterministic, repeatable parts of the workflow while preserving correctness and regulatory rigor.

One of the earliest and most important challenges was defining what “success” meant for a non-deterministic system. Unlike traditional software, an AI model has no single correct output, so we had to be intentional about what “good” looked like in practice. The team set a clear target of 90% precision and designed the system around rapid, measurable iteration.

Rather than judging individual outputs, we focused on tracking performance trends over time, testing changes quickly, and understanding whether they meaningfully moved key metrics. This made it far easier to decide which ideas were worth pursuing and which were not.
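To make that evaluation loop concrete, here is a minimal Python sketch of tracking aggregate precision across an evaluation set and comparing a candidate change against a baseline. It illustrates the idea and is not Point's actual harness: the names `Tally` and `worth_pursuing`, the `min_gain` threshold, and the sample finding IDs are all assumptions made for the example. Only the 90% target comes from the text above.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Tally:
    """Running counts across an evaluation set of title reports (hypothetical)."""

    true_positives: int = 0
    predicted: int = 0

    def add(self, predicted: set[str], verified: set[str]) -> None:
        # A predicted finding counts as correct only if it is in the verified set.
        self.true_positives += len(predicted & verified)
        self.predicted += len(predicted)

    @property
    def precision(self) -> float:
        # Aggregate precision over every report added so far.
        return self.true_positives / self.predicted if self.predicted else 0.0


def worth_pursuing(baseline: Tally, candidate: Tally, min_gain: float = 0.01) -> bool:
    """Keep a change only if it moves aggregate precision by a meaningful margin."""
    return candidate.precision - baseline.precision >= min_gain


TARGET_PRECISION = 0.90  # the target named in the text; everything else is illustrative

baseline, candidate = Tally(), Tally()
baseline.add({"lien-1", "easement-2", "lien-9"}, {"lien-1", "easement-2"})
candidate.add({"lien-1", "easement-2"}, {"lien-1", "easement-2"})
print(worth_pursuing(baseline, candidate), candidate.precision >= TARGET_PRECISION)
```

Aggregating counts across the whole set, rather than scoring each output on its own, is what lets a single surprising output be treated as noise while a shift in the overall number is treated as signal.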

From pilot to production

Development progressed in two phases. An initial hackathon prototype validated that LLMs could reliably identify certain classes of findings, but it also revealed sharp performance cliffs: LLMs excelled at some tasks while failing unexpectedly at adjacent ones. These learnings informed a second, production-focused iteration, in which we designed explicit guardrails, verification steps, and post-processing logic based on the model’s known strengths and weaknesses. This required a deep, empirical understanding of LLM behavior rather than treating the model as a black box.
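As one illustration of what a verification step can look like, the sketch below accepts a model-produced finding only if its type is on an allow-list and its supporting quote actually appears in the report text. Anything else is routed to manual review. This is a hypothetical guardrail written for this post, not a description of the production checks: `Finding`, `ALLOWED_TYPES`, and `verify` are assumed names, and the categories are placeholders.

```python
from __future__ import annotations

from dataclasses import dataclass

ALLOWED_TYPES = {"lien", "easement", "judgment"}  # placeholder categories (assumption)


@dataclass(frozen=True)
class Finding:
    kind: str
    quote: str  # text the model claims to have read in the report


def _normalize(text: str) -> str:
    # Collapse whitespace and case so PDF line breaks do not defeat the match.
    return " ".join(text.split()).lower()


def verify(
    findings: list[Finding], report_text: str
) -> tuple[list[Finding], list[Finding]]:
    """Split model output into accepted findings and ones needing manual review."""
    haystack = _normalize(report_text)
    accepted: list[Finding] = []
    needs_review: list[Finding] = []
    for finding in findings:
        quote = _normalize(finding.quote)
        grounded = bool(quote) and quote in haystack
        if finding.kind in ALLOWED_TYPES and grounded:
            accepted.append(finding)
        else:
            needs_review.append(finding)
    return accepted, needs_review
```

The design choice worth noting is that the guardrail never tries to correct the model. It only decides whether an output is safe to pass along automatically, which keeps expert judgment in the loop for everything else.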

Building a safe path to real-world evaluation

A critical enabler was the creation of Point’s first AI Sandbox, a secure, InfoSec-approved environment for working with production data and documents. Evaluating LLMs on real-world data is essential, but difficult: title reports contain sensitive PII, and synthetic data fails to capture the variability and edge cases that drive model performance. Many teams are forced to choose between safe but unrealistic testing environments and fast but non-compliant workflows.

The sandbox resolved this tradeoff. It enabled rapid iteration on prompts, parameters, and validation logic using real documents without compromising data security — allowing us to rigorously measure performance and converge on a reliable solution.

Impact: beyond time savings

Today, Title Extraction achieves 93.4% precision (our goal was 90%) on eligible findings, with 82% of automated findings accepted by users, and continues to improve as confidence and usage grow.

The impact extends well beyond initial task automation. While direct task execution time decreased by 16%, the more significant gains came from reducing downstream rework. Missed findings, which previously occurred in 22–26% of cases and drove costly revisions and workflow churn, have dropped to just 4–8%. This reduction compounds across the entire servicing lifecycle, shortening cycle times, increasing confidence in early-stage decisions, and materially improving overall throughput.

Beyond the feature itself, this project intentionally laid a reusable foundation. The AI Sandbox, evaluation approach, and development patterns established a repeatable model for deploying LLM-powered automation safely and efficiently. Title Extraction is not just a single win — it’s a blueprint for how Point can scale AI-driven workflows in 2026 and the years ahead.

Contract Sentinel — building a quality engine from composable layers

At Point, the quality of our contracts is mission-critical for our homeowners, our investors, and the diligence processes that connect them. Among the projects our Contract Ops engineering team shipped in 2025, Contract Sentinel stands out for the design challenges it presented. It's a subsystem within our Servicing platform that continuously monitors contracts for quality issues, surfaces them as actionable tasks, and tracks them through resolution.

For example, a missing subordination agreement might be flagged by a checker, mapped into a high-severity quality issue, and automatically turned into a task with a due date for the appropriate operations team.

The project's north star: all contracts are diligence-ready at any time.

The pipeline

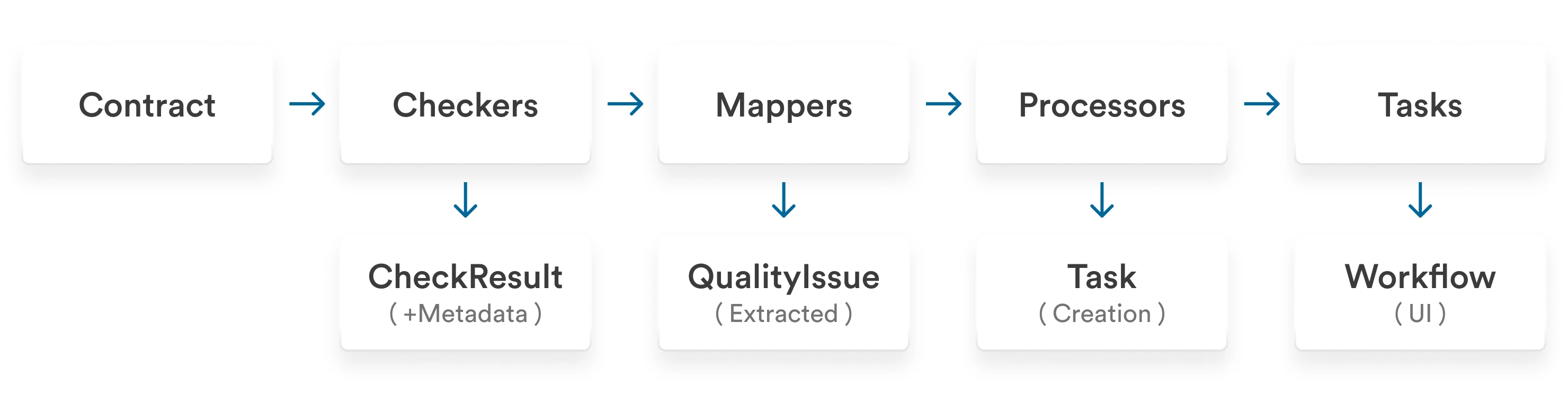

Monitoring end-to-end quality of an asset-backed security is an enormous task: we have to ensure that our documentation is complete, correct, and compliant in a dynamic operational and regulatory environment. The core design decision that enabled us to achieve this was to decompose the problem into four distinct layers, each with a single job:

Checkers inspect a contract and return a pass/fail result along with rich metadata. A document presence checker, for example, returns which specific documents are missing and what's required. Checkers know nothing about issues, tasks, or what happens downstream.

Mappers translate between check results and quality issues. This layer is easy to overlook or fold into the checkers themselves, but it carries a lot of weight. In the forward direction, a single failed check can surface multiple distinct issues, each with its own severity. But mappers also work in reverse: on subsequent assessment runs, they diff current results against existing issues. They deduplicate what's already known, confirm what persists, and identify issues that are no longer present so they can be auto-resolved.

You could argue that these are two separate responsibilities that deserve separate classes. The tradeoff we made was to co-locate them: all the knowledge of how a specific check type's metadata relates to its issue types lives in one place. The alternative, splitting forward mapping and reverse diffing across different classes, risks those two interpretations of the same metadata structure diverging over time. Keeping them together made the system easier to reason about and extend.

Processors take unresolved quality issues and decide what action to take, typically creating a task for the operations team with severity-based due dates.

Tasks are already a fundamental concept in our Servicing platform. Operations teams work tasks for default events, compliance workflows, and other processes. Rather than building a separate resolution system, Contract Sentinel introduces Quality Tasks that build directly on top of this existing infrastructure. Quality issues flow into the same familiar system. Resolution works in both directions: when a team member completes a task, the underlying quality issue is automatically marked resolved. Conversely, if a subsequent quality assessment finds the issue is already gone, the system cancels the now-unnecessary task.
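The four layers above can be sketched in a few dozen lines of Python. This is a simplified illustration, not the Contract Sentinel code: every class, field, and function name here is hypothetical, as are the severity table and the due-date offsets. The point is the shape: the checker returns only a result with metadata, the mapper owns both the forward mapping and the reverse diff, and the processor turns an issue into a task.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import date, timedelta


@dataclass
class CheckResult:
    passed: bool
    metadata: dict = field(default_factory=dict)


@dataclass(frozen=True)
class Issue:
    key: str       # stable identity, used to deduplicate across runs
    severity: str


class DocumentPresenceChecker:
    """Checker: inspects a contract; knows nothing about issues or tasks."""

    def __init__(self, required: set[str]) -> None:
        self.required = required

    def check(self, contract: dict) -> CheckResult:
        missing = sorted(self.required - set(contract["documents"]))
        return CheckResult(passed=not missing, metadata={"missing": missing})


class DocumentPresenceMapper:
    """Mapper: forward mapping and reverse diffing read the same metadata."""

    SEVERITY = {"subordination_agreement": "high"}  # hypothetical severity table

    def to_issues(self, result: CheckResult) -> set[Issue]:
        # Forward: one failed check can surface several distinct issues.
        return {
            Issue(f"missing:{doc}", self.SEVERITY.get(doc, "medium"))
            for doc in result.metadata.get("missing", [])
        }

    def reconcile(
        self, result: CheckResult, open_issues: set[Issue]
    ) -> tuple[set[Issue], set[Issue], set[Issue]]:
        # Reverse: diff the current run against issues that are already open.
        current = self.to_issues(result)
        new = current - open_issues          # not seen before
        persisting = current & open_issues   # already known, still present
        resolved = open_issues - current     # gone, safe to auto-resolve
        return new, persisting, resolved


DUE_IN_DAYS = {"high": 2, "medium": 10}  # hypothetical offsets


def process(issue: Issue, today: date) -> dict:
    """Processor: create a task with a severity-based due date."""
    due = today + timedelta(days=DUE_IN_DAYS[issue.severity])
    return {"issue": issue.key, "due": due}
```

In this sketch, the `resolved` set is what would drive cancellation of tasks that are no longer needed, while completing a task would mark its issue resolved on the task side.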

Extensibility in practice

This layered approach makes the system naturally extensible. We saw this firsthand when we built our new Securitization Diligence tool on top of Contract Sentinel. Diligence adds domain-specific document checks (17+ document types with conditional requirements) by registering its own Checker, Mapper, and Processor classes. The pipeline runs them seamlessly alongside the originals, with no changes to core Contract Sentinel code. In effect, this is an application of the Open/Closed Principle: the system is open for extension without requiring modification.
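A registry is one common way to get this kind of extension without modification. The Python sketch below shows the pattern for checkers only; the same idea would apply to mappers and processors. The decorator, the registry dictionary, and both example checkers are hypothetical names chosen for illustration and are not taken from the Contract Sentinel codebase.

```python
from __future__ import annotations

CHECKERS: dict[str, type] = {}  # hypothetical registry: name -> checker class


def register_checker(cls: type) -> type:
    """Class decorator that adds a checker class to the registry under its name."""
    if cls.name in CHECKERS:
        raise ValueError(f"duplicate checker: {cls.name}")
    CHECKERS[cls.name] = cls
    return cls


def run_all(contract: dict) -> dict[str, bool]:
    """Core pipeline loop. It never names a concrete checker."""
    return {name: cls().check(contract) for name, cls in CHECKERS.items()}


@register_checker
class SignatureChecker:  # stands in for an original checker
    name = "signature"

    def check(self, contract: dict) -> bool:
        return bool(contract.get("signed", False))


@register_checker
class AppraisalOnFileChecker:  # stands in for a Diligence-specific checker
    name = "diligence.appraisal"

    def check(self, contract: dict) -> bool:
        return "appraisal" in contract.get("documents", [])
```

Adding `AppraisalOnFileChecker` required no edit to `run_all`, which is the property the paragraph above describes: new behavior arrives by registration, and the core loop stays closed.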

With the framework in place, we're now building out more comprehensive document checks and adding cross-record consistency checks across related models.

AI-ready by design

Contract Sentinel is traditional business logic: deterministic, auditable, and easy to test and reason about. That was intentional. But the same architecture that makes it easy to work with also makes it a natural home for AI-powered checkers and mappers.

A checker that determines whether a subordination agreement is structurally valid is well-suited to rules-based code. A checker that needs to read an unstructured title commitment, interpret its exceptions against property-specific context, and flag compliance gaps is not. Fitting that kind of judgment into a rigid rules engine means either oversimplifying the check or writing a sprawling conditional tree that's hard to maintain and brittle at the edges.

Because checkers and mappers are self-contained classes with clean interfaces, dropping in an LLM-backed implementation requires no changes to the pipeline. The checker calls a model, returns a typed result, and the rest of the system handles it identically. Complex document understanding, regulatory interpretation, and cross-record reasoning all become viable checks without touching core Contract Sentinel code.
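Here is a rough Python sketch of what "dropping in" an LLM-backed checker can mean in practice. It assumes a hypothetical `Checker` interface and a model client reduced to a plain callable that takes a prompt and returns text. None of these names, nor the prompt wording, come from the real system. The model's reply is parsed and validated before it becomes a typed result, so the calling code sees the same `CheckResult` either way.

```python
from __future__ import annotations

import json
from dataclasses import dataclass
from typing import Callable, Protocol


@dataclass(frozen=True)
class CheckResult:
    passed: bool
    gaps: tuple[str, ...] = ()


class Checker(Protocol):
    def check(self, document_text: str) -> CheckResult: ...


class RequiredPhraseChecker:
    """Rules-based implementation: a fixed phrase must be present."""

    def __init__(self, phrase: str) -> None:
        self.phrase = phrase.lower()

    def check(self, document_text: str) -> CheckResult:
        if self.phrase in document_text.lower():
            return CheckResult(passed=True)
        return CheckResult(passed=False, gaps=(f"missing phrase: {self.phrase}",))


class LlmGapChecker:
    """LLM-backed implementation behind the same interface."""

    PROMPT = "List compliance gaps as a JSON array of strings.\n\n"  # illustrative

    def __init__(self, complete: Callable[[str], str]) -> None:
        self._complete = complete  # hypothetical model client: prompt in, text out

    def check(self, document_text: str) -> CheckResult:
        raw = self._complete(self.PROMPT + document_text)
        try:
            gaps = json.loads(raw)
        except json.JSONDecodeError:
            return CheckResult(passed=False, gaps=("unparseable model output",))
        if not isinstance(gaps, list) or not all(isinstance(g, str) for g in gaps):
            return CheckResult(passed=False, gaps=("unexpected model output shape",))
        return CheckResult(passed=not gaps, gaps=tuple(gaps))


def run(checker: Checker, document_text: str) -> CheckResult:
    """Pipeline code depends only on the Checker interface."""
    return checker.check(document_text)
```

Treating malformed model output as a failed check, rather than raising, is a deliberate choice in this sketch: the rest of the pipeline only ever has to handle a `CheckResult`.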

The takeaway

Building Contract Sentinel by folding all the logic into one place would have been faster to start — one service checks, decides, and acts. Done. The team would have shipped something working, and then spent the next year paying for it.

Instead, they decomposed the problem into layers with clean interfaces, and that choice compounded in ways that became clear during development. When Securitization Diligence came along with 17+ document types and conditional requirements, the team registered new Checker, Mapper, and Processor classes, and the pipeline absorbed them without changing a line of core Contract Sentinel code. When a checker's behavior needed adjustment, the fix stayed contained, with no need to trace logic across a tangled service to understand what would break. When they wanted to test a new check type, they could do so in isolation against real cases before wiring it into the rest of the system.

The reverse-diffing logic in the Mapper layer is a good illustration of where the upfront design work paid off. It would have been easy to fold that into the checkers — cheaper in the short run, harder to reason about over time. Keeping it co-located with the forward mapping meant the knowledge of how each check type's metadata relates to its issues stayed in one place. Engineers who come to this code in a year will find it consistent.

Complex workflows get built as tightly coupled solutions because the alternative takes more thought before you write code. Contract Sentinel is evidence that the tradeoff is worth it.

Closing

Medei's team reached for an LLM not because it was novel, but because the variability of title reports across more than 3,200 U.S. counties made traditional parsers and fine-tuned models impractical to build and maintain. Ryan's team decomposed Contract Sentinel into layers not because it was faster, but because they were thinking about the engineers who would maintain and extend it over the coming years. Both teams understood the problem deeply before they wrote code.

These two projects are a small window into a much larger body of work. The same discipline showed up across every surface of Point's platform in 2025: in evaluation strategy, in system decomposition, and in knowing where not to use AI at all.

That is the culture Point is building.
